Kenneth Ives February 1, 2024

As a major driver of generative AI (GenAI) development, the technology sector is sometimes overlooked as an industry experiencing AI-fueled disruption itself. While healthcare, financial services, and entertainment media are currently thought to be undergoing the most transformation, as a software engineer I’m not immune to GenAI’s disruptive reverberations. Since late 2022, when ChatGPT and other consumer-facing releases of the advanced tech started hitting the market, family and friends have continually asked if I’m worried about being replaced by GenAI. While I can’t predict the future, I am passionate about exploring the present. Recently, I began work on a personal open-source project using Spring Boot and Vue.js, and was keen to see just how powerful ChatGPT could be as a coding assistant, collaborating from the ground up.

Prior to this project, I had some experience using ChatGPT, but nothing of this scale. When the project started, ChatGPT-4 was what was available to me, and I chose it over other language processing tools because it was highly accessible, easy to set up, and simply the most familiar to me. In this post I want to explore what I felt were the strengths and limitations of ChatGPT in this use case, along with my recommendations for using it as a development tool.

The ChatGPT Boost: Coding and Design Assistant

Right out of the gate, one of ChatGPT’s most evident strengths is as a pure boilerplate accelerator. The real grind when it comes to building a new project from the ground up isn’t grappling with difficult engineering decisions or navigating complex business logic. More frequently, it’s the sheer volume of setup tasks that bogs down velocity. Though usually uncomplicated and standardized, boilerplate toil can sap any human’s ability to create – and we are creators!

What ChatGPT thrives on creating is boilerplate. Getting the chatbot to spit out all the initial configuration files, walk through the steps for setting up your dependencies, and write the initial controllers and models with “hello world” data is a massive boost to development efficiency. My personal project, the S3 Media Archival Application, benefited a great deal from this. ChatGPT walked me through all the setup, creating the standard repository structure and a basic CRUD API I could build on – everything my self-hosted web application, designed to facilitate archiving media data to S3 Deep Archive, would need. It was all set up in a few short hours, despite it being my first time using these frameworks.

    import org.springframework.boot.SpringApplication;
    import org.springframework.boot.autoconfigure.SpringBootApplication;
    import org.springframework.jms.annotation.EnableJms;
    import org.springframework.scheduling.annotation.EnableScheduling;

    @SpringBootApplication
    @EnableJms
    @EnableScheduling
    public class MediaArchivalApplication {

        /**
         * Main method which starts the application.
         *
         * @param args Command line arguments passed to the application.
         * @throws Exception If the application fails to start.
         */
        public static void main(String[] args) throws Exception {
            SpringApplication.run(MediaArchivalApplication.class, args);
        }
    }

Of course, if ChatGPT were just a boilerplate generator, it wouldn’t be half as useful as it actually is. Given a prompt that lays out clear requirements, ChatGPT is fully capable of producing complicated algorithms. They often require tweaks to get working, but the speed of the results is undeniable. It’s much easier to fix up code that’s 95% correct but maybe missing an edge case than it is to think through all the loops and branching statements yourself, from scratch.

One of the more complex things I got ChatGPT to produce was a recursive algorithm for scanning through a directory based on a provided path. I outlined each requirement one by one, and ChatGPT gave me an algorithm that, upon inspection, was mostly correct. In order to make adjustments, I pointed out where I recognized errors and asked ChatGPT to repair them. After each adjustment, I asked the chatbot to walk me through what would happen for a given input. After some back-and-forth making those adjustments, that was that. In a half hour, one of the more complicated pieces of logic in my code was done.

    private void scanDirectory(String currentPath, String[] segments, LibraryModel library) {
        File currentDir = new File(currentPath);

        // Check if the current directory exists
        if (!currentDir.exists() || !currentDir.isDirectory()) {
            return;
        }

        // If there are no more segments left, create/update media objects for
        // all files and directories
        if (segments.length == 1) {
            File[] filesAndDirs = currentDir.listFiles();
            if (filesAndDirs != null) {
                for (File fileOrDir : filesAndDirs) {
                    createOrUpdateMediaObject(fileOrDir, library);
                }
            }
            return;
        }

        // Get the next segment
        String nextSegment = segments[1];
        String[] remainingSegments = Arrays.copyOfRange(segments, 1, segments.length);

        if (nextSegment.startsWith("${") && nextSegment.endsWith("}")) {
            File[] subDirs = currentDir.listFiles(File::isDirectory);
            if (subDirs != null) {
                for (File subDir : subDirs) {
                    scanDirectory(currentPath + "/" + subDir.getName(), remainingSegments, library);
                }
            }
        } else {
            scanDirectory(currentPath + "/" + nextSegment, remainingSegments, library);
        }
    }

The most surprising strength of ChatGPT, to me, was as a standards and design assistant. Whereas writing code is something I was wholly expecting ChatGPT to be able to do, this was different. I did not initially set out to write my frontend in Vue.js; I actually did not have a frontend framework in mind going into this project. So I gave ChatGPT the technical context behind my project, and asked for frontend suggestions. It gave a very good summary of three options: React, Angular, and Vue. I sustained the dialogue, asking questions about the frameworks based on the information ChatGPT provided, and then I settled on Vue.

But it goes deeper than just helping with design decisions. Since this was my first project using Vue, I didn’t know how the project is usually organized. ChatGPT was able to give me the standard directory and code structure. It was able to tell me what kind of component separations are usually used in Vue and why. This kind of idea-bouncing, insight-providing exchange demonstrates ChatGPT’s multi-faceted value – which far surpasses that of a mere personal code generator.

Navigating the Limitations: Errors and Specificity

Simple errors are ChatGPT’s most obvious and critical drawback. ChatGPT makes mistakes. Sometimes it’s hallucinating – presenting made-up information as fact. Other times it might just be a matter of spitting out buggy code. Usually, these mistakes are easy to spot, and represent more frustration and annoyance than a dangerous problem. But there are instances when the error is subtle, and easy to miss. This is much more insidious, as these are the kinds of mistakes that could easily make their way into your end product.

A good example would be when ChatGPT wrote me a race condition. The code fired off a message to an asynchronous queue, then made modifications to the same database that was being read by the new asynchronous thread. All that was needed to fix this code was for it to simply send the message after the database modifications. It was such an easy error to let slip through, and it took hours of debugging before I identified the strange behavior as a race condition and found the offending code.
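The ordering problem described above can be sketched with plain JDK classes. This is only an illustration, not the project's code: the in-memory map stands in for the database, `send` stands in for the queue listener, and the class and method names are made up for this example. To keep the demonstration deterministic, the buggy variant deliberately waits for the consumer before saving, which forces the worst-case interleaving that a real race only hits some of the time.

```java
import java.util.Map;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ConcurrentHashMap;

class SendOrderingSketch {
    // Stand-in for the database: path -> "archiving" flag.
    static final Map<String, Boolean> db = new ConcurrentHashMap<>();

    // Stand-in for the queue listener: runs on another thread and
    // reports the flag value it read for the given path.
    static CompletableFuture<Boolean> send(String path) {
        return CompletableFuture.supplyAsync(() -> db.getOrDefault(path, false));
    }

    // Buggy order: the message goes out first, the state is saved afterwards.
    static boolean sendThenSave(String path) {
        CompletableFuture<Boolean> seen = send(path);
        boolean observed = seen.join(); // force the worst case: consumer runs before the save
        db.put(path, true);
        return observed;
    }

    // Fixed order: the state is saved first, then the message goes out.
    static boolean saveThenSend(String path) {
        db.put(path, true);
        return send(path).join();
    }

    public static void main(String[] args) {
        System.out.println("send then save: consumer saw archiving=" + sendThenSave("/a")); // false
        System.out.println("save then send: consumer saw archiving=" + saveThenSend("/b")); // true
    }
}
```

In the first ordering the consumer can act on stale state; in the second it always sees the saved flag, regardless of how quickly it picks up the message.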

This is not a mistake I usually would have made were I writing the function from scratch – I know to do the logic first and then fire off the asynchronous message. Yet with such a high volume of fresh ChatGPT output to look at, I saw that all the right lines were there and did not catch the placement error right away. This is similar to how the “transposed letter effect” highlights the human brain’s ability to correctly identify words, even when the middle letters are in the wrong order. To counter this, make sure you have unit and integration tests across your code, so that the output follows best practices for test-driven development.

@PostMapping("/archive")

public ResponseEntity<String> archiveMediaObjects(@RequestBody List<String> paths) {

for (String path : paths) {

MediaModel media = mediaRepository.findByPath(path);

if (!media.isArchiving() && !media.isRecovering()) {

media.setArchiving(true);

media.setUploadProgress(-1);

media.setTarring(false);

mediaRepository.save(media);

jmsTemplate.convertAndSend("archivingQueue",

media.getPath());

}

}

return ResponseEntity.ok("Archive requests sent successfully");

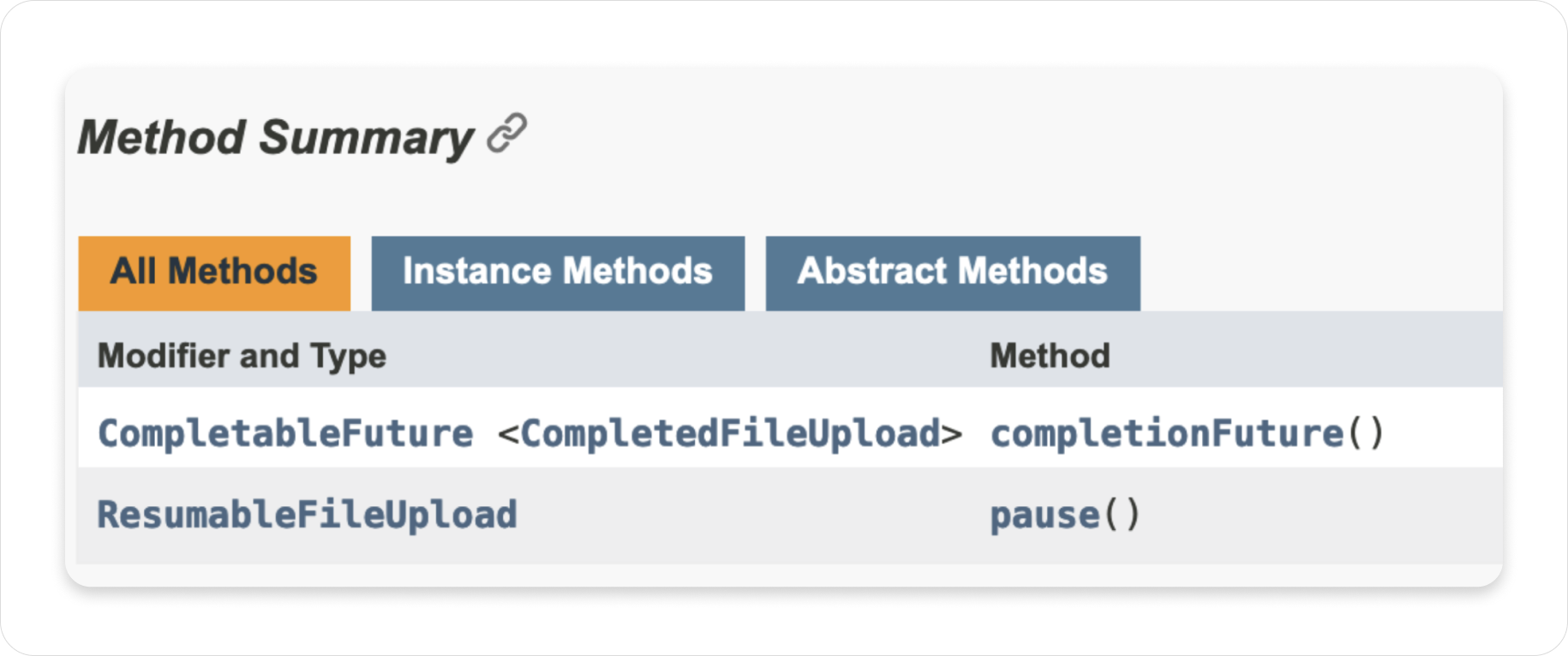

}Mistakes aside, another limitation is that there are times when ChatGPT is fully capable of giving you the answer you’re looking for, but you’re not capable of asking it the right question. I was at my wits’ end while trying to figure out how to cancel upload jobs with the S3 asynchronous transfer manager. I had asked ChatGPT how to cancel, stop, or abort – and all the solutions it gave me were either made up, didn’t work, or were just plain impractical. So I moved on to more traditional methods: I read, read some more, and then re-read the AWS SDK documentation. Eventually, I discovered that the UploadFile function on the transfer manager does not have cancellation, it only has “pause” – which if you never resume it, is of course, the same as cancellation. If you ask ChatGPT how to pause an upload, it immediately gives you the right answer. But not knowing that very specific vocabulary difference was all it took for its responses on the matter to have been completely useless.

Coding Smart with ChatGPT: My Top Recommendations

While the upsides I experienced generating code with ChatGPT far outweigh these limitations, I do think that in order to ensure optimal outcomes, it’s important to follow a few best practices.

Retain Multiple Conversations

The first recommendation I have for effective work with ChatGPT is to keep many different small conversations. ChatGPT tends to forget context very quickly. I set up a conversation to do code review, got bogged down asking it about some issues with unit tests, and by the time I asked it to review the next file, it had completely forgotten we were doing code review. By keeping many different conversations for each unique issue, you minimize the chances of ChatGPT missing context you think it should know (which can lead to errors). This approach also allows you to easily find previous answers and code, an exercise which in long, drawn-out conversations becomes very tedious.

Pro tip: As part of keeping multiple conversations going about the same application, I had a copy-and-pastable prompt beginning that outlined the context of all my requests, by stating my application purpose, its main tech stack, etc. Though not yet universally available at the time of my experimentation, newer editions of ChatGPT allow you to accomplish this with custom instructions.

Keep Prompts Tight and Specific

My next recommendation is to keep your prompts as concise and clear as possible. Good prompts are your key to success. Tell ChatGPT exactly what you want it to do. Pretend it’s one of those genies that would cartoonishly bestow upon the less articulate exactly what they wish for. I find that the higher the level of specificity, the less the AI tends to hallucinate. As an example, imagine asking ChatGPT to unit test a file. It might produce two tests, covering a true scenario and a false scenario of a boolean method. But there might be multiple ways for a method to fail, and each one of those should be tested. If you ask instead, “write me unit tests that cover all possible failure conditions for this boolean method,” you’ll probably get what you want on the first try.
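To make the difference concrete, here is a minimal sketch of a boolean method with several distinct ways to return false, along with one check per failure condition. Everything in it is hypothetical and invented for illustration: the `isArchivable` method, its rules, and the list of supported extensions are not from my project. It also uses plain checks in `main` rather than a test framework so that it runs with nothing but the JDK.

```java
import java.util.Locale;
import java.util.Set;

class MediaPathChecks {
    // Hypothetical rule set, for illustration only.
    static final Set<String> SUPPORTED = Set.of("mkv", "mp4", "mov");

    /** Returns true only for a non-blank, absolute path with a supported extension. */
    static boolean isArchivable(String path) {
        if (path == null || path.isBlank()) return false;       // failure 1: missing input
        if (!path.startsWith("/")) return false;                 // failure 2: relative path
        int dot = path.lastIndexOf('.');
        if (dot < 0 || dot == path.length() - 1) return false;   // failure 3: no extension
        String ext = path.substring(dot + 1).toLowerCase(Locale.ROOT);
        return SUPPORTED.contains(ext);                          // failure 4: unsupported type
    }

    // Tiny assertion helper so the sketch needs no test framework.
    static void check(String path, boolean expected) {
        if (isArchivable(path) != expected) {
            throw new AssertionError("unexpected result for: " + path);
        }
    }

    public static void main(String[] args) {
        check("/media/film.mkv", true);    // the single "true" scenario
        check(null, false);                // missing input
        check("   ", false);               // blank input
        check("media/film.mkv", false);    // relative path
        check("/media/film", false);       // no extension at all
        check("/media/film.", false);      // trailing dot, empty extension
        check("/media/film.exe", false);   // unsupported extension
        System.out.println("all checks passed");
    }
}
```

A vague “unit test this file” prompt would likely yield the first check plus one of the others; the more specific prompt is what gets you the remaining five.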

Remember: ChatGPT is an Accelerator, Not a Fellow Engineer

My final recommendation is to keep in mind that ChatGPT (and GenAI in general) is so far not a substitute for software engineering knowledge. It’s a development accelerator. I do not believe you can build something with ChatGPT that you couldn’t build on your own. You can just do it a heck of a lot faster with ChatGPT.

I am an experienced backend developer, and with ChatGPT’s help, I am very happy with my server code. It’s tight, of good quality, and well tested. On the other hand, I am much less experienced and comfortable with frontend work, and the frontend of my project is considerably messier, and much less professional. I used ChatGPT to help me write both, but the differentiator was my own knowledge and experience. I couldn’t write effective, specific, and requirement-driven prompts without the prerequisite frontend knowledge, and it was easy for me to miss mistakes, or not recognize when ChatGPT was producing code that didn’t follow standards. ChatGPT can spit out a lot of good building blocks, but you still need to know how to assemble them properly and cleanly.

All in all, my experience with ChatGPT on this project was very positive. I was blown away by the volume of work it allowed me to accomplish in such a short time. As I grew more comfortable using and prompting ChatGPT, I was able to integrate it into my workflow much better, which sped up my work even further. But at the same time, ChatGPT was not able to act as the software developer. It is not able to build anything software-related without a competent developer guiding it and assembling its output.

I would absolutely use it again for starting a project from scratch, where I can go to the tool methodically for each building block, making sure I’m asking it to solve a problem I can explain well. For the first time, I’m also much more interested in trying out some other AI-powered engineering assistants. I’m excited to see what the future holds for the power of generative AI. I still can’t predict that – but I could do with telling my friends and family to stop worrying so much.
